AI Girlfriends & Mental Health: The Truth Hidden in the Fine Print

Some links are affiliate links. If you shop through them, I earn coffee money—your price stays the same.

Opinions are still 100% mine.

It’s impossible to scroll through social media lately without seeing an ad for an AI companion. They promise a non-judgmental ear, a partner who’s always there, and a cure for the modern epidemic of loneliness. As someone fascinated by the intersection of technology and human connection, I decided to dive into the world of AI girlfriends and their impact on mental health.

The ads are compelling, but as I explored these apps, a critical question emerged: How much of this is genuine support, and how much is just clever marketing? I wanted to look past the glossy app store descriptions to scrutinize how these AI companion services present their therapy-style features and, more importantly, what their disclaimers reveal. What I found was a concerning gap between what’s promised and what’s legally stated.

What Exactly Is an AI Girlfriend?

First, let's define our terms. An AI girlfriend is a highly advanced chatbot, often with a customizable avatar, designed to simulate a romantic partner. Using complex large language models (LLMs), they can hold surprisingly human-like conversations, remember past details, and adapt to your personality. The goal isn't just to answer questions; it's to create a sense of genuine connection and companionship. Popular platforms in this space include Nomi.ai, Kupid.ai, and candy.ai.

The Appeal: A Digital Shoulder to Lean On

The appeal is undeniable. In a world where many of us feel isolated, the idea of a 24/7 companion offering unconditional support is incredibly attractive. For people dealing with social anxiety or limited opportunities to connect, these apps can feel like a lifeline.

User testimonials and my own research suggest several ways these apps may support mental well-being.

| Potential Benefit | How It Helps |

|---|---|

| Reduced Loneliness | Provides constant, on-demand companionship, helping to ease feelings of isolation. |

| Non-Judgmental Space | Offers a safe outlet to express thoughts and fears without the risk of criticism or social fallout. |

| Coping Mechanism Practice | Some apps guide users through mindfulness or stress-reduction exercises rooted in real therapeutic techniques. |

| Social Skills Practice | Allows users to practice conversational skills in a low-stakes environment, potentially building confidence for real-life interactions. |

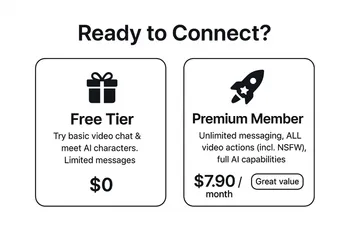

| Accessibility | A much cheaper and more readily available option than traditional therapy. |

Many users find these companions to be incredible tools for self-reflection and processing emotions. It’s clear that for some, the benefits of AI companionship are very real.

The Blurry Line: When Marketing Sounds Like Therapy

This is where things get complicated. Many of these apps don't just market themselves as fun companions; they use language directly associated with mental healthcare. I saw many examples of marketing claims that sound like therapy.

For instance, one app, Romantic AI, promises to "maintain your MENTAL HEALTH." Another, Replika, is advertised as a "companion who cares." This language is intentionally designed to attract users seeking genuine emotional and mental support. Some apps even claim to use techniques from established therapies like Cognitive Behavioral Therapy (CBT), creating the impression of a clinically backed tool.

The American Psychological Association has raised red flags about this, warning that chatbots impersonating therapists can be misleading. While chatbots can be supportive, they are not a replacement for a trained professional. This led me to the most important part of my investigation: the fine print.

The Reality Check: What the Disclaimers Are Really Saying

When I started digging into the Terms of Service for these apps, I found a stark contradiction. The same services that market themselves as mental health aids have legal disclaimers that say the exact opposite.

Here’s what the common mental health disclaimers in AI apps really mean:

- "For entertainment purposes only." This means that despite any health-focused marketing, the company legally defines its product as a game or toy, not a health tool.

- "NOT a substitute for professional medical advice." They are explicitly telling you not to use their service for real mental health crises or as a replacement for a doctor or therapist.

- The service is provided "as is." This means there is no guarantee of quality, effectiveness, or that the AI will provide helpful or even safe responses.

- The company is not liable for any harm. This clause protects the company from legal responsibility if a user's mental health worsens or they suffer damages as a result of using the app.

For example, Romantic AI's terms state they make "NO CLAIMS... THAT THE SERVICE PROVIDE [sic] A THERAPEUTIC, MEDICAL, OR OTHER PROFESSIONAL HELP." Sweetdream.ai is clear that its companions "are not real people and should not be considered substitutes for human relationships, professional therapy, or medical advice."

This creates a massive transparency problem. These companies profit from the perception that they offer therapeutic benefits while legally absolving themselves of any responsibility. The lack of regulation for AI mental health applications leaves users in a vulnerable position.

How to Safely Navigate the World of AI Companions

If you're curious about trying an AI companion, it's crucial to go in with your eyes wide open. Based on my deep dive, here is a checklist for evaluating these apps responsibly.

- Scrutinize the Marketing: Be wary of apps making grand claims about "curing" or "treating" mental health issues. Look for honesty about the app's limitations.

- Read the Fine Print (Seriously!): Always read the Terms of Service and Privacy Policy. Look for those "entertainment only" clauses and understand that you are using the app at your own risk.

- Question "Therapy" Features: If an app claims to use CBT or other techniques, ask yourself if there's any evidence or professional oversight mentioned. Often, there isn't.

- Guard Your Privacy: Understand what data the app collects. You're sharing your deepest thoughts; make sure you know where that information is going. Data privacy is a major ethical concern with AI girlfriend apps.

- Set Healthy Boundaries: Use the app as a supplement, not a substitute, for real human connection. Be mindful of the risks of AI relationships, such as over-dependency.

- Know When to Get Real Help: An AI cannot manage a crisis. If you are struggling, please reach out to a licensed therapist, counselor, or a crisis hotline. An AI is a tool, not a treatment.
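If you're comfortable with a little scripting, the "read the fine print" step can be partly automated. Here's a minimal Python sketch that scans pasted Terms of Service text for the kinds of disclaimer wording discussed above. The phrase list and the `find_disclaimers` function name are my own assumptions for illustration, not anything these apps publish, and real terms are worded in many different ways, so a clean result doesn't mean the disclaimers aren't there.

```python
import re

# Hypothetical phrase list, based on the disclaimer wording discussed in this article.
# Real terms vary, so treat these patterns as a starting point, not a complete set.
DISCLAIMER_PATTERNS = {
    "entertainment only": r"entertainment\s+purposes\s+only",
    "not professional advice": r"not\s+a\s+substitute\s+for\s+professional",
    "provided as is": r"\bas[\s-]+is\b",
    "liability waiver": r"not\s+(?:be\s+)?liable",
}


def find_disclaimers(terms_text: str) -> list[str]:
    """Return the labels of every disclaimer pattern found in the text."""
    # Drop quotation marks so wording like: provided "as is" still matches.
    cleaned = re.sub(r'["\u201c\u201d]', "", terms_text)
    return [
        label
        for label, pattern in DISCLAIMER_PATTERNS.items()
        if re.search(pattern, cleaned, flags=re.IGNORECASE)
    ]


if __name__ == "__main__":
    # Made-up sample text, not a quote from any real app's terms.
    sample = (
        "This service is for entertainment purposes only and is NOT a "
        'substitute for professional medical advice. It is provided "as is".'
    )
    for label in find_disclaimers(sample):
        print("Found:", label)
```

To use it, paste an app's Terms of Service into a string and pass it to `find_disclaimers`. A script like this only flags phrases worth a closer look; you still need to read the clauses themselves.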

Frequently Asked Questions

How do AI girlfriends affect mental health?

What are the privacy risks with AI companions?

What are the signs of an unhealthy dependency on an AI girlfriend?

Why isn't there more regulation for these apps?

What does the future look like for AI in mental health?

Final Thoughts

AI companions are a powerful and complex new technology. They offer a potential balm for loneliness and a convenient space for emotional expression. However, the industry's lack of transparency about its mental health claims is a serious issue.

Companies need to be more honest in their marketing and clearer about the limitations of their products. As users, we need to be more critical consumers. We must read the fine print, protect our data, and remember that while a digital companion can offer comfort, it cannot replace the nuanced understanding of a human connection or the skilled guidance of a mental health professional. The conversation is just beginning, and it’s one we all need to be a part of.